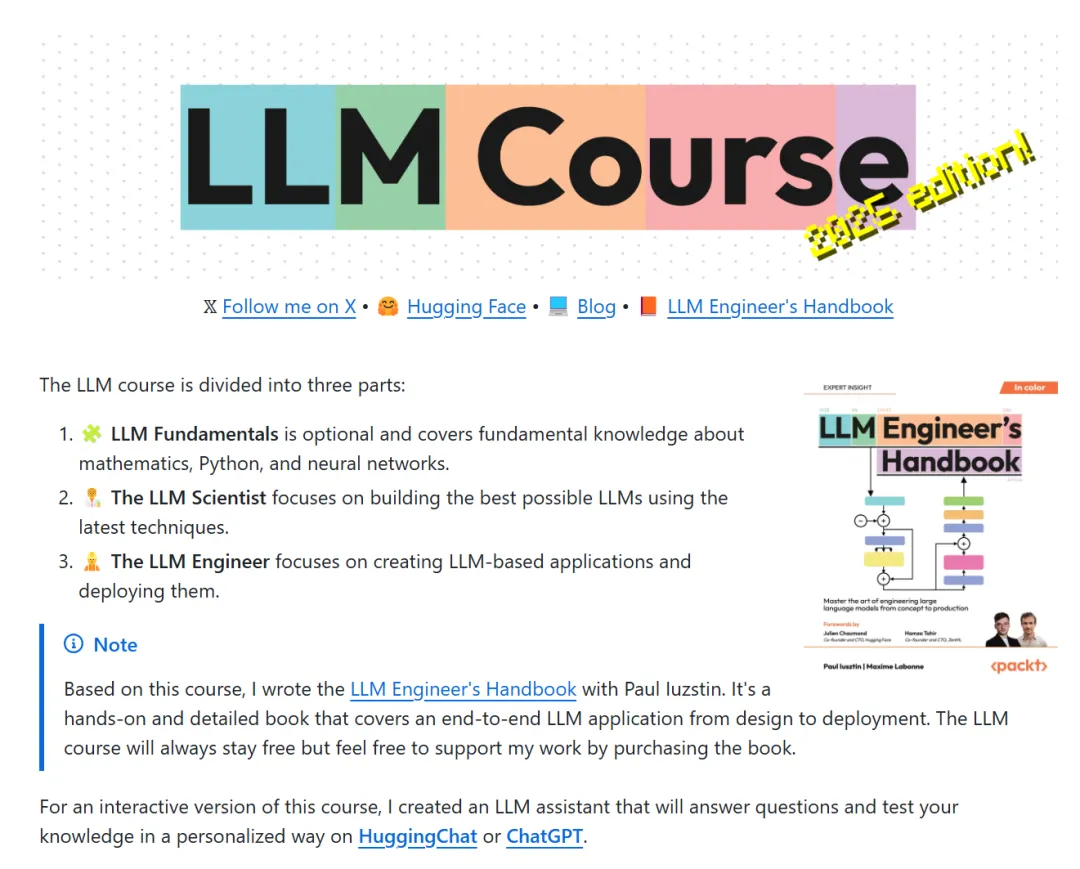

As Large Language Models (LLMs) develop rapidly across many fields, more researchers and practitioners want to learn how to train, evaluate, and optimize these complex models efficiently. If you are curious about LLMs and eager to dig into the latest trends and techniques, the brand-new LLM Course 2025 Edition released by senior machine learning expert mlabonne is definitely worth checking out!

Course Overview

This course helps learners systematically master the latest techniques and best practices for working with LLMs. Through detailed theoretical explanations and practical exercises, learners gain a deep understanding of key areas such as LLM training, data processing, model evaluation, quantization, and emerging trends.

Course Content

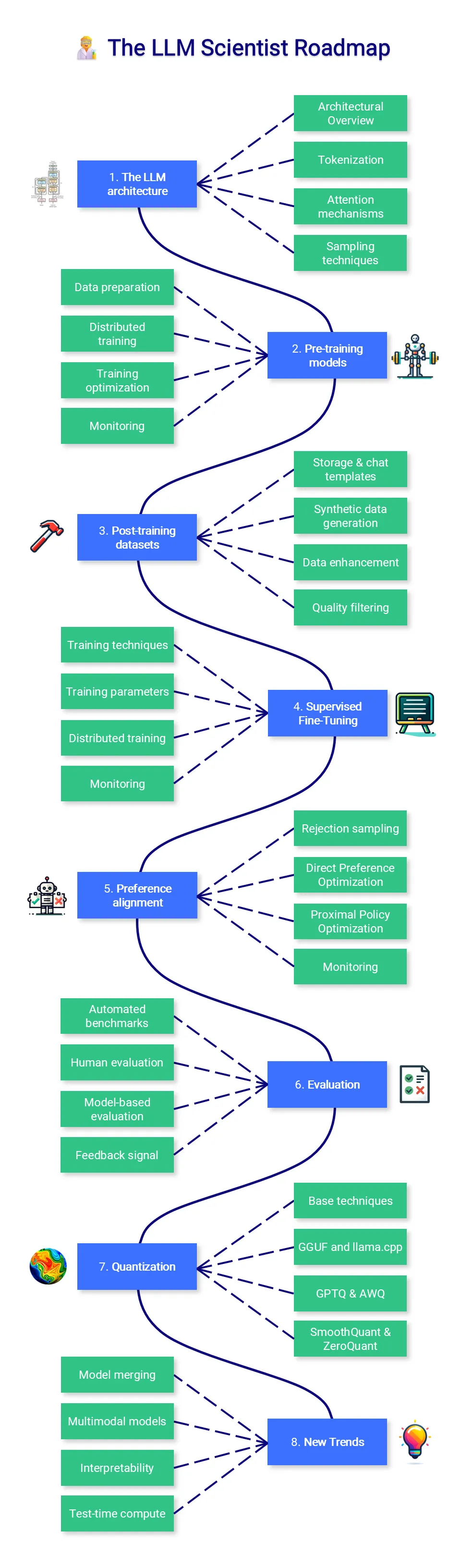

1. LLM Training and Optimization

This section explains in detail how large language models are trained and the common challenges that arise along the way. It introduces modern LLM optimization methods that help learners improve training efficiency across different hardware environments. Through practical examples, learners will master the core techniques of model training.

2. Dataset Selection and Construction

In this part, the course recommends commonly used open datasets related to LLM training and guides learners on how to customize datasets based on specific task requirements. It also explains how to effectively process and clean data to prepare high-quality inputs for LLM training.
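
As a rough illustration of the kind of processing and cleaning described above, the following sketch normalizes whitespace, drops very short samples, and removes exact duplicates. It is not taken from the course: the function name `clean_corpus`, the `min_chars` threshold, and the sample texts are all assumptions made for this example.

```python
import hashlib
import re


def clean_corpus(texts, min_chars=20):
    """Normalize whitespace, drop very short samples, remove exact duplicates.

    Hypothetical helper for illustration only; real pipelines usually add
    near-duplicate detection and quality filtering on top of this.
    """
    seen = set()
    cleaned = []
    for text in texts:
        # Collapse runs of whitespace (including newlines) into single spaces.
        normalized = re.sub(r"\s+", " ", text).strip()
        if len(normalized) < min_chars:
            continue  # too short to be a useful training sample
        # Hash the lowercased text so duplicates differing only in case match.
        digest = hashlib.sha256(normalized.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        cleaned.append(normalized)
    return cleaned


raw = [
    "Explain  gradient   descent in one paragraph.",
    "explain gradient descent in one paragraph.",
    "ok",
    "Summarize the attention mechanism used in transformers.\n",
]
cleaned = clean_corpus(raw)
print(cleaned)
```

Hashing the normalized text keeps memory use low on large corpora, at the cost of catching only exact matches after normalization.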

3. Model Evaluation

This section introduces a range of evaluation methods to help learners measure LLM performance on specific tasks comprehensively. Emphasis is placed not only on accuracy but also on the robustness and interpretability of models.

4. Quantization Techniques

This section explains how quantization compresses models by storing weights at lower numerical precision, which reduces storage and computational overhead and improves deployment efficiency. Learners will study current quantization methods and practices that cut resource usage with minimal loss of accuracy.
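
To make the idea concrete, here is a minimal sketch of symmetric (absmax) 8-bit quantization in plain Python. It is one simple scheme chosen for illustration, not necessarily the method the course teaches; the function names and the sample weights are assumptions.

```python
def quantize_absmax(weights, bits=8):
    """Map floats to signed integers using a single absmax scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the scale."""
    return [v * scale for v in quantized]


weights = [0.12, -0.5, 0.33, 1.27, -1.0]  # made-up example values
q, scale = quantize_absmax(weights)
restored = dequantize(q, scale)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four (for 32-bit floats), and the printed `max_error` shows the small precision cost paid for that saving.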

5. Emerging Trend: Test-Time Compute Scaling

Test-Time Compute Scaling is an emerging trend in the current LLM field. The course explores how spending more compute at inference time, for example by sampling several candidate answers or allowing longer reasoning before responding, can improve output quality without retraining the model.
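
One simple way to spend extra compute at inference time is to sample several answers and keep the most common one (majority voting). The sketch below simulates this with a toy solver; `noisy_solver` is a hypothetical stand-in for a sampled LLM response, and the 60% per-sample accuracy is an assumed figure, not a measurement.

```python
import random
from collections import Counter


def noisy_solver(question, rng):
    """Hypothetical stand-in for one sampled model answer (right ~60% of the time)."""
    correct = sum(question)
    if rng.random() < 0.6:
        return correct
    return correct + rng.choice([-2, -1, 1, 2])  # a plausible wrong answer


def majority_vote(question, n_samples, rng):
    """Sample n answers and return the most frequent one."""
    answers = [noisy_solver(question, rng) for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


def accuracy(n_samples, trials=2000, seed=0):
    """Estimate how often majority voting over n samples gives the right answer."""
    rng = random.Random(seed)
    question = (17, 25)
    target = sum(question)
    hits = sum(majority_vote(question, n_samples, rng) == target
               for _ in range(trials))
    return hits / trials


for n in (1, 5, 25):
    print(f"samples per question: {n:2d}  accuracy: {accuracy(n):.3f}")
```

In this simulation the estimated accuracy rises as more samples are drawn per question, which is the basic trade of extra inference compute for better answers.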

Target Audience

Whether you are a beginner entering the LLM field or an experienced practitioner, this course will provide you with ample learning resources. Through systematic learning and practice, you will be able to significantly enhance your professional skills in the LLM domain.

Access

To learn more about the LLM Course 2025 Edition, please visit the following GitHub link:

LLM Course 2025 Edition